⚡️ happy tuesday.

Sam Altman in October 2024: "Ads are kind of a last resort." Fourteen months later, OpenAI said this weekend: "We're testing ads in ChatGPT." The honeymoon’s over, folks. More on that below.

Today, we’re talking about:

Vercel’s AI sales agent saved them $2M

ChatGPT’s ad era begins

Vibe-code a building

Claude Cowork: no BS review

WTF is human-in-the-loop?????

Free masterclass: AI agents for dummies (join live 4 free)

📧 Reply w/ your take: Is design the bottleneck at your company?

We’ll Be Your Chief AI Officer

Tenex is the AI services firm behind this newsletter. We build software that moves the P&L. Our work sits at the intersection of engineering, operations, and real business constraints.

From 20 Sales Reps to 2 (+ The Agent Behind It)

the problem: Sales development doesn't compound; it only scales with headcount. Every additional SDR brings another salary, another ramp period, another personal definition of what "qualified" actually means. The math is linear. The friction isn't.

the solution: At Vercel, GTM engineering leader Drew Bredvick replaced that sprawl with software. He built an AI agent that evaluates every inbound lead—researching the company, scoring intent, and routing only the credible opportunities to sales. Everything else is handled automatically.

the result: The sales development team shrank from twenty people to two, who now focus exclusively on edge cases and high-touch accounts. Notably, the other eighteen weren't let go. They were moved into higher-value work elsewhere in the org.

here's the play in six steps:

Shadow your best performer

Pull 90 days of historical data

Iterate until 95% agreement

Run in parallel with people

Get the co-sign from leadership

Hand your top dog the controller

1. shadow your best performer

Sit next to your best SDR for a full day and document every decision—when they qualify, when they disqualify, every signal they check. You're hunting for the gap between what they say they do and what they actually do.

Drew's team found the real qualification criteria had almost nothing to do with the official rubric or process docs. Reps were checking LinkedIn profiles, scanning websites for tech stack indicators, and pattern-matching on how leads found Vercel. None of it was documented.

pro tip: Record screen shares with audio. You'll miss things in real time that become obvious on replay—the mouse movements, the half-second pauses on a company's About page. Take notes on every decision. You're building a truth table of inputs and outputs.
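If you want that truth table in a form you can later test prompts against, log each observed call as one row: the signals the rep actually checked, the decision, and the reason they gave out loud. Comparing the stated reason with the signals they really looked at is how the say/do gap shows up. A minimal Python sketch; the field names (`checked_linkedin`, `tech_stack_seen`, `found_us_via`, and so on) are hypothetical examples based on the signals Drew's team found, not Vercel's actual schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


# Hypothetical schema for illustration only; not Vercel's.
@dataclass
class ShadowedDecision:
    """One observed SDR call: the inputs they checked and the output they chose."""
    lead_id: str
    checked_linkedin: bool   # did they open the contact's LinkedIn profile?
    tech_stack_seen: str     # e.g. a framework spotted on the lead's website
    found_us_via: str        # e.g. "docs", "referral", "ad"
    decision: str            # "qualified" or "disqualified"
    stated_reason: str       # what the rep said they based the call on
    notes: str = ""          # what you only noticed on the recording


COLUMNS = [f.name for f in fields(ShadowedDecision)]


def to_csv(rows: list[ShadowedDecision]) -> str:
    """Serialize observed decisions as a CSV truth table (one row per call)."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buf.getvalue()


rows = [
    ShadowedDecision("L-001", True, "Next.js", "docs", "qualified",
                     "Matches the rubric", "Paused on the About page"),
]
print(to_csv(rows))
```

The same CSV can double as extra test cases once you start iterating on the prompt.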

2. pull 90 days of historical data

Export 90 days of contact form submissions with outcomes attached. Did they close? Ghost? Become a $500K whale? This is your ground truth. Every prompt iteration gets tested against it.
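A minimal sketch of turning a raw export into that ground truth, assuming a CSV with hypothetical columns `lead_id`, `submitted_on` (YYYY-MM-DD), `message`, and `outcome` (blank when nothing has happened yet). It keeps only the last 90 days and only rows with an outcome attached, since a lead with no outcome can't tell you whether a call was right.

```python
import csv
import io
from datetime import date, timedelta


# Assumed (hypothetical) export columns: lead_id, submitted_on, message, outcome.
def load_ground_truth(csv_text: str, today: date, days: int = 90) -> list[dict]:
    """Keep submissions from the last `days` days that have an outcome attached."""
    cutoff = today - timedelta(days=days)
    kept = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        submitted = date.fromisoformat(row["submitted_on"])
        # Rows with no outcome yet can't serve as ground truth, so skip them.
        if submitted >= cutoff and row["outcome"].strip():
            kept.append(row)
    return kept


export = """lead_id,submitted_on,message,outcome
L-1,2025-12-01,Need enterprise SSO,closed_won
L-2,2025-12-15,Just browsing,ghosted
L-3,2025-08-01,Old lead,closed_won
L-4,2026-01-10,Pricing question,
"""
truth = load_ground_truth(export, today=date(2026, 1, 20))
print([row["lead_id"] for row in truth])  # ['L-1', 'L-2']
```

L-3 falls outside the 90-day window and L-4 has no outcome yet, so both drop out.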

3. iterate until 95% agreement

Open an AI coding tool: Cursor or Windsurf (code editors with AI built in), or Claude Code (Anthropic's AI coding agent that runs in your terminal). You don't need to know how to code. Drop your CSV (the spreadsheet export from step 2) into a new project and start a conversation: "Look at this lead data. I'm going to give you a prompt to evaluate leads. Tell me if each one is qualified or not."

Or skip the blank-page problem entirely with Vercel's open-source lead processing agent. Connect it to your CRM, swap in your qualification criteria, and iterate from there.

Now grind it out. Run the prompt against a batch. Compare the agent's calls to what actually happened—not what humans decided, but whether the lead converted. Find disagreements. Fix the prompt. Repeat. You're aiming for 95%+ agreement with historical outcomes.
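The compare-and-fix loop boils down to a small scoring function. A sketch, assuming you've already run the prompt over a batch and recorded each agent call as True (qualified) or False, with `converted` taken from the historical outcomes; the function and argument names are hypothetical.

```python
# Hypothetical helper for one iteration of the compare-and-fix loop.
def score_against_outcomes(agent_calls: dict, converted: dict, target: float = 0.95):
    """Compare agent calls to what actually happened.

    agent_calls: lead_id -> True if the agent said "Qualified"
    converted:   lead_id -> True if the lead actually converted
    Returns (agreement rate, lead_ids to review, whether the target is met).
    """
    shared = sorted(agent_calls.keys() & converted.keys())
    disagreements = [lid for lid in shared if agent_calls[lid] != converted[lid]]
    agreement = 1 - len(disagreements) / len(shared) if shared else 0.0
    return agreement, disagreements, agreement >= target


calls = {"L-1": True, "L-2": False, "L-3": True, "L-4": False}
truth = {"L-1": True, "L-2": False, "L-3": False, "L-4": False}
agreement, to_review, done = score_against_outcomes(calls, truth)
print(agreement, to_review, done)  # 0.75 ['L-3'] False
```

Each lead in `to_review` is a prompt fix waiting to happen: figure out why the agent got it wrong, adjust the prompt, rerun the batch.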

pro tip: Force the agent to reason before it decides. As Drew put it: "If you make it tell its reasons first, it'll be smarter."
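One way to hold the agent to "reasons first" is to require a fixed labeled format (the prompt here uses REASONING, CONFIDENCE, DECISION, NEXT ACTION) and reject any reply where a label is missing or the decision shows up before the reasoning. A minimal parser sketch, assuming the model puts each label at the start of its own line; it's a validation idea, not part of Vercel's open-source agent.

```python
import re

EXPECTED = ("REASONING", "CONFIDENCE", "DECISION", "NEXT ACTION")
# Assumes each label starts its own line, e.g. "DECISION: Qualified".
LABEL = re.compile(r"^(REASONING|CONFIDENCE|DECISION|NEXT ACTION):[ \t]*", re.MULTILINE)


def parse_response(text: str) -> dict:
    """Split a labeled reply into fields; reject it if any are missing or out of order."""
    matches = list(LABEL.finditer(text))
    order = tuple(m.group(1) for m in matches)
    if order != EXPECTED:
        raise ValueError(f"expected {EXPECTED}, got {order}")
    parsed = {}
    for i, m in enumerate(matches):
        # A field's value runs until the next label, so reasoning can span lines.
        end = matches[i + 1].start() if i + 1 < len(matches) else len(text)
        parsed[m.group(1)] = text[m.end():end].strip()
    return parsed


reply = """REASONING: Series B devtools company, Next.js on their site,
asked about enterprise SSO.
CONFIDENCE: High
DECISION: Qualified
NEXT ACTION: Route to sales"""
parsed_reply = parse_response(reply)
print(parsed_reply["DECISION"])  # Qualified
```

Rejected replies can simply be retried, which keeps malformed or decision-first answers out of your accuracy numbers.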

human-verified prompt:

You are a lead qualification agent. For each lead, analyze the following signals and provide your reasoning BEFORE your decision:

Company signals: website quality, tech stack, company stage, employee count
Intent signals: how they found us, what they asked for, urgency indicators
Fit signals: ICP match, use case alignment, budget indicators

Structure your response as:
REASONING: [Your analysis of each signal category]
CONFIDENCE: [High/Medium/Low]
DECISION: [Qualified/Not Qualified]
NEXT ACTION: [Route to sales / Auto-respond / Request more info]

Be conservative. You naturally want to qualify leads to make humans happy. Resist that urge. A false positive wastes sales time. A false negative just means we follow up later.

4. run in parallel with people

Your prompt works on historical data. Now prove it works live—and prove it to the skeptics.

Deploy the agent in passive mode. It processes every lead, makes a decision, but takes no action. Humans still do the work. You're building a paper trail of correct calls that skeptics can audit. Here's what to track:

agreement rate: Agent vs. human decisions.

accuracy rate: Agent vs. actual outcomes.

processing time: Lead received → decision made.

confidence distribution: How often the agent is certain vs. uncertain.

error log: When it got it wrong, and why.
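A sketch of how those five numbers could be computed from passive-mode logs. The `ShadowRecord` fields are hypothetical; swap in whatever your CRM actually records. Accuracy only counts leads whose outcome is known, since plenty of live leads won't have closed or ghosted yet.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


# Hypothetical record shape for one lead processed in passive mode.
@dataclass
class ShadowRecord:
    lead_id: str
    received_at: datetime
    decided_at: datetime
    agent_qualified: bool
    human_qualified: bool
    confidence: str                    # "High" / "Medium" / "Low"
    converted: Optional[bool] = None   # None until the outcome is known
    error_note: str = ""               # why it was wrong, filled in on review


def shadow_report(records: list) -> dict:
    """Compute the five passive-mode metrics from a non-empty list of records."""
    known = [r for r in records if r.converted is not None]
    return {
        "agreement_rate": sum(r.agent_qualified == r.human_qualified for r in records) / len(records),
        "accuracy_rate": (sum(r.agent_qualified == r.converted for r in known) / len(known)
                          if known else None),
        "median_seconds_to_decision": median(
            (r.decided_at - r.received_at).total_seconds() for r in records),
        "confidence_distribution": Counter(r.confidence for r in records),
        "error_log": [(r.lead_id, r.error_note) for r in known
                      if r.agent_qualified != r.converted],
    }


t0 = datetime(2026, 1, 20, 9, 0, 0)
report = shadow_report([
    ShadowRecord("L-1", t0, t0.replace(second=30), True, True, "High", converted=True),
    ShadowRecord("L-2", t0, t0.replace(second=50), True, False, "Low", converted=False,
                 error_note="Student side project"),
    ShadowRecord("L-3", t0, t0.replace(second=10), False, False, "High"),
])
print(report)
```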

The proving period will outlast the building period by a wide margin. Plan accordingly.

5. get the co-sign from leadership

Drew started with individual contributors on the ground—the people actually doing the work—and got them to validate that the agent was making good calls. Then he partnered closely with the functional leader for the SDR team, someone who would lean into AI rather than block it.

He let sales tweak qualification criteria, let marketing adjust scoring weights, and let ops define routing rules. When leadership builds alongside you, they stop being gatekeepers and start being advocates.
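That division of ownership maps naturally onto a config with one section per team. The sketch below is a plain weighted-score stand-in, not a description of how Vercel's agent scores leads; every key, threshold, and point value is a hypothetical placeholder. Using integer points keeps threshold comparisons exact.

```python
# Hypothetical config: each team owns (and edits) only its own section.
CONFIG = {
    "criteria": {   # sales: what counts as a qualifying signal
        "min_employees": 50,
        "target_stack": {"next.js", "react"},
        "high_intent": {"pricing", "demo", "enterprise"},
    },
    "weights": {    # marketing: how much each signal is worth (points out of 100)
        "size": 30,
        "stack": 30,
        "intent": 40,
    },
    "routing": [    # ops: the first threshold the score meets decides the action
        (70, "Route to sales"),
        (40, "Request more info"),
        (0, "Auto-respond"),
    ],
}


def signals(lead: dict, criteria: dict) -> dict:
    """Turn raw lead fields into yes/no signals using sales' criteria."""
    return {
        "size": lead["employees"] >= criteria["min_employees"],
        "stack": bool(criteria["target_stack"] & {t.lower() for t in lead["tech_stack"]}),
        "intent": lead["intent"] in criteria["high_intent"],
    }


def route(lead: dict, config: dict = CONFIG) -> tuple:
    """Score a lead with marketing's weights, then apply ops' routing rules."""
    hits = signals(lead, config["criteria"])
    score = sum(points for name, points in config["weights"].items() if hits[name])
    for threshold, action in config["routing"]:
        if score >= threshold:
            return score, action
    return score, config["routing"][-1][1]


print(route({"employees": 20, "tech_stack": ["Next.js"], "intent": "pricing"}))
# (70, 'Route to sales')
```

Because each team's knobs live in a separate section, a change from marketing can't silently rewrite sales' definition of "qualified."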

6. hand your top dog the controller

Flip the switch—but keep humans in the loop. The agent processes every lead, makes a qualification decision, and even drafts the response. But a person reviews before anything goes out. The system recommends; humans decide.

Drew's team went from 20 SDRs to two. Those two handle the genuinely ambiguous cases—the leads that even the agent isn't confident about. They're not doing less important work. They're doing the most important work.
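The "system recommends, humans decide" rule can be made structural: the agent's output is only ever a draft in a queue, and the send step refuses to run without a named approver. A minimal sketch; the queue names, the `Draft` fields, and the `send` callback (standing in for your email or CRM integration) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    lead_id: str
    decision: str     # "Qualified" / "Not Qualified"
    confidence: str   # "High" / "Medium" / "Low"
    reply: str        # response drafted by the agent, not yet sent


def triage(draft: Draft) -> str:
    """Pick the (hypothetical) human queue a draft lands in. Nothing is sent here."""
    if draft.confidence == "Low":
        return "sdr_queue"      # ambiguous: an SDR works the lead directly
    return "review_queue"       # confident: a person still approves before sending


def send_if_approved(draft: Draft, approved_by: Optional[str],
                     send: Callable[[str, str], None]) -> bool:
    """Send the agent's draft only after a named reviewer has approved it."""
    if not approved_by:
        return False
    send(draft.lead_id, draft.reply)
    return True


outbox = []
draft = Draft("L-7", "Qualified", "High", "Thanks for reaching out! Booking a call now.")
print(triage(draft))  # review_queue
send_if_approved(draft, None, lambda lid, body: outbox.append(lid))    # not sent
send_if_approved(draft, "dana", lambda lid, body: outbox.append(lid))  # sent
print(outbox)  # ['L-7']
```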

The same loop works for any function where humans make pattern-matched decisions on structured data. Think: customer support triage, contract review, expense approvals, and even content moderation. The list is long.

A Technical Guy Teaches a Non-Technical Guy to Build an AI Agent

Guest: Tenex Co-Founders, Arman Hezarkhani + Alex Lieberman

Day: Wednesday, Jan 21

Time: 4:00 PM – 5:00 PM EST

Inside HubSpot: How Marketing Teams Actually Operationalize AI

Guest: HubSpot CMO, Kipp Bodnar

Day: Wednesday, Jan 28

Time: 4:00 PM – 5:00 PM EST

The key promises: ads never affect responses, are always clearly labeled, and conversations stay private from advertisers (meanwhile, Plus, Pro, Business, and Enterprise tiers stay ad-free).

Whether this is pragmatic business or a broken promise depends on how cynical you're feeling. But here's what matters: this is a response to distribution reality. Google's Gemini lives where questions already happen—Search, Chrome, Android, Workspace. As AI use becomes routine, the surface that captures attention shapes the economics. Ads in ChatGPT = the consequence of competing with a model that ships inside the internet itself.

the opportunity: Corporate advertisers don't move fast. They take six months to plan and another six months to test before going heavy. Right now, ChatGPT has hundreds of millions of weekly users + not nearly enough advertisers. There's going to be a window (maybe 12–18 months) where you can acquire customers cheaply before the big players flood in. The same thing happened with early Google and Facebook ads. If you're running a paid acquisition motion, pay attention.

Google launched Personal Intelligence in Gemini this week. With one tap, Gemini can now connect to your Gmail, Photos, YouTube history, and Search history to reason across your data and return personalized responses.

The use cases are half legitimately useful and half novelty. Ask, “Recommend tires for my car,” and Gemini pulls your car’s make and model from old emails, checks trip patterns from Photos, and suggests options. It can even surface your license plate number ahead of an auto shop visit.

It’s opt-in, so you can choose which apps to connect, and Google says it won’t train on your inbox or photo library directly.

But still, this is the most aggressive “your data as context” move from any major lab. For business users, imagine an AI that already knows your calendar, email threads, travel patterns, and project history.

Google’s own warning during this testing stage: “Gemini may also struggle with timing or nuance, particularly regarding relationship changes, like divorces.”

Cool, cool, cool.

Claude, paired with Unreal Engine (a real-time 3D engine used by game studios and VFX teams) via MCP (the Model Context Protocol, a standard way to connect AI to external tools), can now create 3D buildings from a single prompt. Describe a house, get a rendered structure in Unreal Engine.
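For the curious: MCP messages are JSON-RPC 2.0. When Claude decides to use a tool that an MCP server exposes, the client sends the server a `tools/call` request naming the tool and its arguments, and the server does the actual work (here, driving Unreal Engine). A sketch of building that request; the tool name `create_building` and its arguments are hypothetical, not the real tool list of any Unreal Engine MCP server.

```python
import json


def tool_call_request(request_id: int, tool_name: str, arguments: dict) -> str:
    """Build an MCP `tools/call` request, which is a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# "create_building" and its arguments are hypothetical; a real server advertises
# its own tools, which clients discover with a "tools/list" request.
request = tool_call_request(1, "create_building", {
    "description": "two-story modern house with a flat roof and a garage",
    "floors": 2,
})
print(request)
```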

use cases:

Architecture firms visualizing client briefs in minutes

Real estate developers mocking up properties before breaking ground

Game studios prototyping environments at 10x speed

Interior designers iterating on layouts with clients in real-time

3D printing: generate .stl files for custom parts from plain English

HGTV-style "dream home" generators for consumer apps

There's no doubt AI speeds up product development. So are designers now the people everyone waits on?

You can vibe-code a working front-end in an afternoon, store your design systems in GitHub, and use tools like Google Stitch to generate code directly from sketches. One Webflow developer put it bluntly: designers are "doodling pictures of websites instead of just building them."

Figma's shipping AI features, but the stock tells a different story—down sharply since the 2025 IPO peak, as if the market's pricing in a world where the prototype is the product.

The question isn't whether design matters. It's whether the workflow where everyone waits on design still does. Is design a bottleneck at your company? Hit reply, and we'll feature the best takes next week.

We took the new Claude Cowork for a spin to see how far Anthropic’s latest productivity tool actually goes. We used it for recruiting, web app development, and sketching out a marketing strategy—and it handled more of the real work than we expected.

In this session:

What it’s surprisingly good at

Where it still falls down

Why tools like this are going to change how knowledge workers spend their time

eli5: The phrase “Human in the Loop” gets thrown around like it's self-explanatory. It's not. HITL doesn't mean a person is doing the work. It means a person is watching the work get done… aka you, the human, are the nanny.

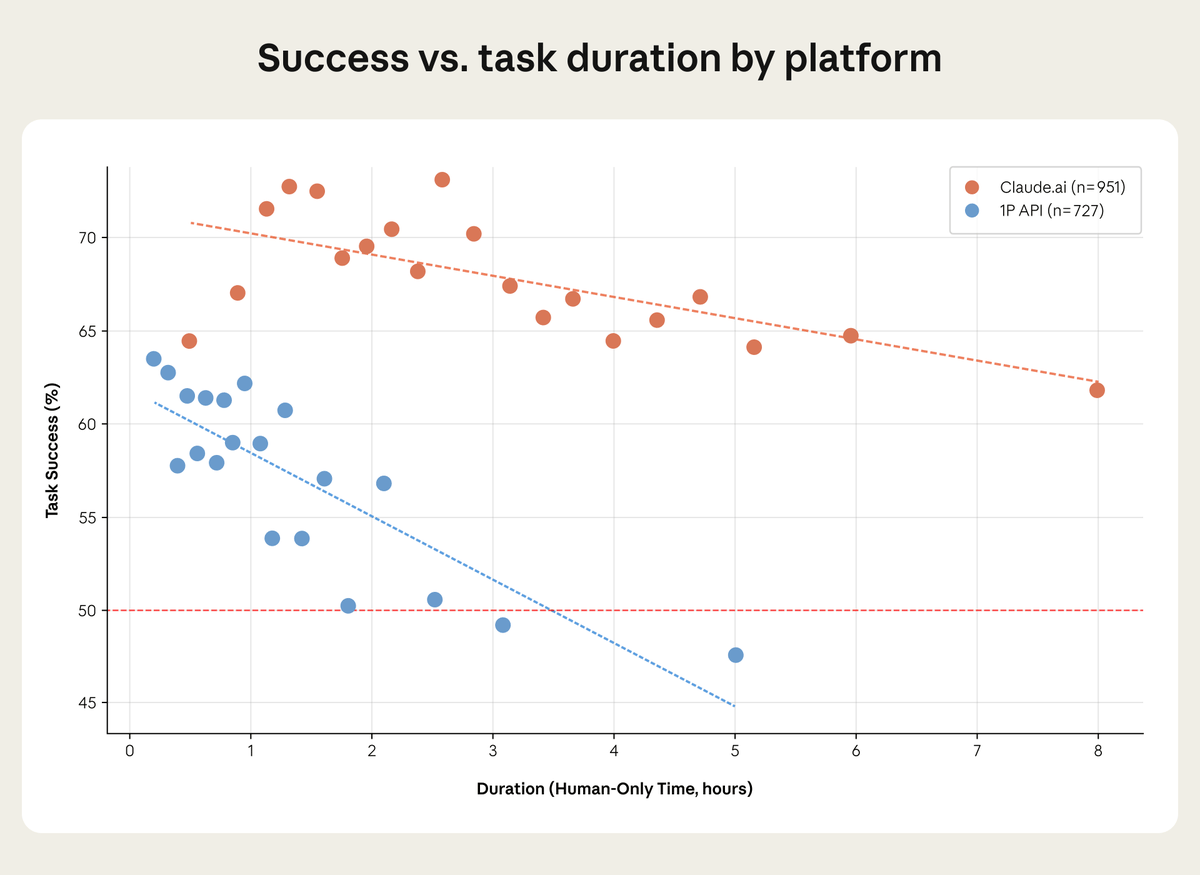

why this matters: Anthropic just dropped the fourth edition of their Economic Index—a report tracking how people actually use ol’ Claude irl. Buried in there is this chart:

Look at the two lines.

The orange dots are people chatting with Claude directly, iterating on tasks, watching the output, and course-correcting as they go. The blue dots are API users—developers building more autonomous systems where Claude runs with less human oversight.

As tasks get longer + more complex, the API success rate craters. It drops below 50% around the three-hour mark and keeps falling. The orange line (people working with Claude directly) dips too, but much, much less. Even on eight-hour tasks, success stays above 60%.

The gap between those two lines is the entire value of keeping people involved in work. The person acts as a manual harness—catching small failures before they grow into massive issues, nudging the AI back on track when it drifts, iterating toward success instead of hoping for it on the first try (one-shotting).
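A back-of-the-envelope way to see why the harness matters: if each step of a long task succeeds independently with probability p, an unsupervised run of n steps finishes with probability pⁿ. If a reviewer catches every failed step and lets it be redone, each step gets more than one shot. The numbers below are illustrative assumptions, not figures from Anthropic's report; real steps aren't independent and real reviewers miss things. With p = 0.95, a 10-step run finishes about 60% of the time unsupervised versus roughly 97.5% with one retry per step.

```python
# Illustrative model only: assumes independent steps and a reviewer who
# catches every failure. Not derived from the Economic Index data.
def chain_success(p: float, n: int, attempts: int = 1) -> float:
    """Chance an n-step task finishes when each step succeeds with probability p.

    attempts=1: no oversight, so any failed step sinks the run.
    attempts>1: a reviewer catches each failed step and lets it be redone.
    """
    per_step = 1 - (1 - p) ** attempts
    return per_step ** n


for steps in (1, 10, 30):
    solo = chain_success(0.95, steps)
    supervised = chain_success(0.95, steps, attempts=2)
    print(f"{steps:>2} steps: unsupervised {solo:.1%}, with a reviewer {supervised:.1%}")
```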

armchair philosophy: There's something almost paradoxical about this moment in AI. Everyone's racing toward full autonomy. The dream is agents that run unsupervised—systems you can point at a problem and walk away from. And sure, that's coming.

But the AI that's actually working in the present has a human backstop somewhere.

Capability = what the model can do.

Reliability = what it actually delivers when the stakes are real.

The gap between these concepts is where most AI projects die.

A human-in-the-loop approach closes that gap by making the system's failures recoverable.

apply it: This is exactly what Vercel did with their lead agent. The AI handles everything—research, scoring, routing, and even drafting responses. But nothing goes out without a human review.

🎙️ Speaking of humans in the loop—that's exactly what we're breaking down this week on Human in the Loop, our free live webinar where operators, builders, and AI leads get into what’s driving profits. This week: AI agents for dummies. RSVP here.

Open roles:

AI Strategist

Talent Acquisition Lead

Technical Recruiter

Forward Deployed Engineer

Applied AI Engineer

Engagement Manager

Salary ranges vary by role and experience. Additional comp based on output. Must be NY-based.