⏩ tl;dr

“Build, build, build, build. Just do it. Ship the thing. Don’t talk, just code.” Man, Ryan Carson has a way with words.

How to perform an AI colonoscopy

A Series A CEO’s AI Agent Workshop (RSVP for FREE)

Google DeepMind’s Logan Kilpatrick (RSVP for FREE)

McKinsey’s 2025 AI Report, but in English

RAG. What is it good for?

Reply to this email + I’ll send you your new nickname.

Build an AI Strategy That Doesn’t Suck (+ Delivers ROI)

the problem: We know damn well that using AI in your biz feels like eating an elephant right now. Sometimes you don’t know where to bite first (any tusk people in the house?).

Most teams obsess over tools while execs deal with FOMO (McKinsey breakdown below).

Forget that. The companies winning with AI don’t start with the tools. They begin by securing buy-in, problem mining, and using AI as a tool to build a robust roadmap.

the strategy: Alex and Arman (the peeps behind Tenex, the company that started ultrathink) have run hundreds of these AI colonoscopies for $50B giants + scrappy upstarts.

Put your gloves on, Becky. It's time to avoid 2026 irrelevancy:

get executive buy-in or bust

make the company confess (surveys)

interrogate power users

perform a data exorcism w/ Claude Code

build a roadmap using Notion MCP

The first three steps determine whether AI becomes a profit engine or a pilot scrapyard. For the last two, you’ll 100% want our complete playbook, which shows how problem mining evolves into a tactical company report and roadmap.

get executive buy-in or bust: AI adoption dies in the hands of bureaucracy. You need a bigwig with social currency to become a bulldozer, champion the change, and defend the work.

New hires and mid-levels rarely have the juice. Occasionally they can spark change from the ground up, but only by showing a big win w/ scalable returns.

Before anyone starts pitching moonshot AI ideas, you need to be aligned on how AI adoption actually works. Show them these phases:

single-player tools: Give people off-the-shelf AI tools (ChatGPT or Cursor).

single-player processes: Think of the AR person who turns bills into invoices, sends them out, then chases payments. Automate that + save a week's worth of time.

multiplayer, single-function process: Same idea, bigger lane. Take a workflow inside one function (the SDR-to-AE pipeline) + rebuild it end-to-end with AI.

multiplayer, multifunction processes: Cross-functional automation (e.g., performance marketing + creative ops constantly shipping new assets).

pro tip: Stay away from phase 4 until you’ve crushed phases 1–3. It’s complicated without the foundational layer in place.

make the company confess: People won’t always tell managers what’s broken, but they will tell a survey.

Steal ours. We worked hard on it. Send it to tenured ICs, mid-level managers, and other operators. The goal is at least 30 responses.

Each question has a purpose:

Q: List your weekly tasks.

use case: Surfaces tasks that just aren’t worth people’s time in the age of AI.

Q: What’s your most inefficient and time-consuming task? Explain.

use case: Puts a spotlight on previous attempts at making the task more efficient.

interrogate power users: Skip the one-way mirrors and handcuffs—but record everything. We do this problem mining stage virtually, transcribing everything (crucial for the next stage: Claude Code synthesis).

We use Notion’s AI meeting recorder.

Here’s the spread:

interview 6–12 people for 30 mins each

half execs = understand business priorities, talent mix, org dynamics

half operators = hand-picked rank-and-file employees from the survey

Arman asks the same questions every time (+ personalizes them based on the surveys).

what do you do?

what are you excited about with AI?

what are you skeptical about with AI?

If they flagged “report building sucks” in the survey, go deeper in the call.

“You mentioned reporting takes forever—walk me through the exact steps. How many hours a week? Who else touches it? What breaks?”

➿ Enjoying the outline? Grab the full playbook of the exact $50K process (plus the steps that turn all surveys and interviews into an undeniable roadmap).

Human in the Loop — Upcoming Live Events

How a Series-A CEO Runs His Company Without Meetings

YC-backed founder. Operating in the real world, not AI-Twitter fantasy land. Built an AI command center that kills 80% of meetings and still gives him complete visibility into the pipeline, customers, and product feedback. If you're drowning in 1:1s and status calls, this is the playbook.

Guest: Justin Fineberg

Company: Cassidy

Topic: The AI workflow that replaces meetings with signal

Time: Wed, Nov 12 · 4–5pm ET · Virtual

RSVP for FREE

How a DeepMind Leader Is Shaping the Future of AI Dev Tools

From NASA to Apple to OpenAI, and now Google DeepMind. Logan Kilpatrick is behind the push to make the Gemini API the best one for real-world use. You’ll walk away with a deep understanding of it + a new playbook.

Guest: Logan Kilpatrick

Company: Google DeepMind

Topic: Frontier AI for developers

Time: Tue, Nov 25 · 2–3pm ET · Virtual

RSVP for FREE

McKinsey just dropped its 2025 AI Report, and everyone’s talking about it.

Here are the big three takeaways, but in English:

adoption ≠ impact

90% of companies use AI, but only 40% see real bottom-line gains.

takeaway: Make something with AI that moves P&L. PowerPoint decks aren’t progress. If no one’s rebuilding workflows, your AI task force is just corporate cosplay.

the real winners don’t just sprinkle AI on top

they rebuild workflows (think: the four phases of AI adoption)

“A little AI assist” is the corporate equivalent of putting a spoiler on a Honda Civic and calling it F1.

takeaway: High performers are three times more likely to redesign processes, not just “AI-assist” old ones.

leadership ownership is the cheat code

companies where leaders personally drive AI are also three times more likely to scale it.

takeaway: If execs aren’t in the trenches, working to make AI happen (reworking budgets + pushing forward to get an AI roadmap), your org is cooked.

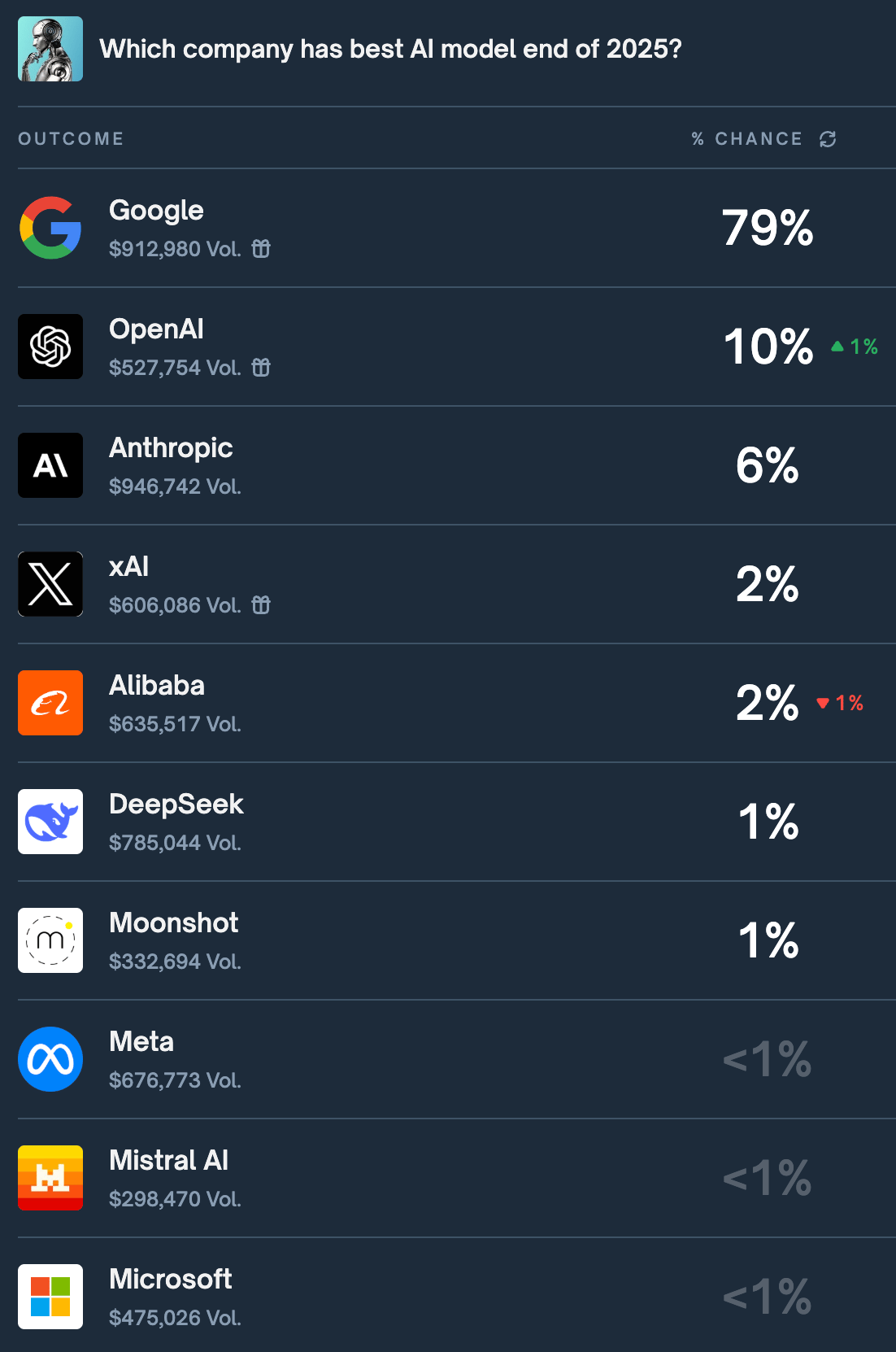

How does Google not win it all once it’s all said and done?

ELI5: They’re owning the entire race.

Not just saying that bc we have Logan Kilpatrick on air with us soon.

Google’s the only company that makes the apps, the brains, the cloud the brains live on, the math to power it, and the chips underneath it all.

Take a look at the current standings on Polymarket’s best AI model of 2025 bet (we see you, Moonshot).

“I built this to keep up with the technical wizards in the office,” Alex Lieberman (Tenex’s cofounder) wrote in our team Slack channel.

Here’s the full prompt:

Objective: Create a comprehensive research report on [INSERT TOPIC HERE]. The goal is to build a deep conceptual understanding of the topic — from its theoretical foundations to its real-world applications — so that I can use this as a launchpad for further exploration.

Audience: A non-technical but intellectually fluent reader. I’m comfortable following complex discussions, but I’m not formally trained in this technical domain.

Tone & Style:
- Write in a clear, structured, and explanatory style.
- Include technical depth, but translate every piece of jargon into plain English.
- After each complex term, formula, or mechanism, provide:
  a) A plain-language translation (explain it like you’re teaching an intelligent layperson).
  b) A real-world, tangible example or analogy that makes the idea concrete.

Content Requirements:

1) Foundations Section
- Define the core principles, vocabulary, and historical context behind [TOPIC].
- Explain why this field exists, what problems it solves, and who pioneered it.
- Use simple examples to show the basic mechanics at play.

2) Core Concepts & Mechanics Section
- Dive into the key theories, processes, or frameworks that make up the topic.
- Introduce any math, algorithms, or scientific models central to the field.
- For each technical concept, pair the explanation with:
  a) A plain-language breakdown.
  b) A real-world illustration (e.g., from everyday life, business, nature, or technology).

3) Applications & Implications Section
- Show how [TOPIC] is applied in real-world systems, industries, or technologies.
- Include notable case studies or examples that demonstrate its impact.
- Explain why understanding these concepts matters: what it enables or changes.

4) Integration & Broader Context Section
- Connect this field to adjacent domains (e.g., how it interacts with math, physics, biology, economics, etc.).
- If relevant, trace how the theory translates into practice (e.g., from code → circuits → behavior).
- Highlight open questions or ongoing research frontiers.

5) Formatting & Accessibility Guidelines
- Use clear headings, subheadings, and summaries at the end of major sections.
- Define jargon inline, not in a glossary.
- Use metaphors, analogies, or thought experiments liberally.
- If helpful, include short “mental models” or “rules of thumb” to aid intuitive understanding.

Output Goal: A research-style explainer (typically 3,000–5,000 words) that is educational, accessible, and intellectually rigorous — something that helps a curious but non-specialist reader gain a working, conceptual mastery of [TOPIC].
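One practical snag: the prompt spells its placeholder two ways, `[INSERT TOPIC HERE]` in the objective and `[TOPIC]` everywhere else, so swapping only one leaves stray brackets in what you paste. Here’s a minimal Python sketch, assuming you’ve saved the prompt as a plain string; `fill_prompt` is a hypothetical helper we made up for illustration, not part of any tool.

```python
import re

# The research prompt uses both of these placeholder spellings.
PLACEHOLDERS = ("[INSERT TOPIC HERE]", "[TOPIC]")


def fill_prompt(template: str, topic: str) -> str:
    """Replace every known topic placeholder in the prompt with the real topic."""
    for placeholder in PLACEHOLDERS:
        template = template.replace(placeholder, topic)
    # Fail loudly on any other bracketed TOPIC variant (e.g. "[TOPIC NAME]").
    leftover = re.search(r"\[[^\]]*TOPIC[^\]]*\]", template)
    if leftover:
        raise ValueError(f"Unfilled placeholder: {leftover.group(0)}")
    return template


if __name__ == "__main__":
    snippet = "Create a research report on [INSERT TOPIC HERE]. Explain who pioneered [TOPIC]."
    print(fill_prompt(snippet, "retrieval-augmented generation"))
```

The leftover check is there because a hand-edited template is exactly where a third spelling tends to sneak in.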

background: Google rolled out a new File Search system in the Gemini API—essentially pushing Retrieval-Augmented Generation (RAG) into the mainstream by making it automatic instead of a developer chore.

in plain English: Fewer hallucinations. You know when you ask ChatGPT to reference something from a file you uploaded days ago, and it answers with total confidence but gets it completely wrong?

That’s because large language models are brilliant pattern-matchers by design. They are not trained in the ancient ways of the Dewey Decimal System. RAG gives them an actual reference system to look things up in, instead of hoping they “remember.” Now Gemini got the upgrade.

Think of RAG like this:

Your company already has answers—they're just scattered in:

Google Drive

Notion docs

PDFs on your laptop

HR handbook

Emails

Pricing sheets

Policy docs

Onboarding guides

SOP folders no one opens

Random “final_v7_REAL_FINAL.docx” files

Normally, when you ask AI a question, it guesses based on its training data. With RAG, the AI first pulls the relevant passages from your real files, then answers using them.

So instead of:

“How many PTO days do we get?”

AI (guessing): “Most companies give 10–15 days.”

You get:

“How many PTO days do we get?”

AI (with RAG): “You get 20 days. Page 4 of your HR handbook. Here’s the line.”
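To make “read your files first, then answer” concrete, here’s a toy sketch in Python. It is NOT how Gemini File Search works under the hood: real RAG systems typically rank chunks with embeddings and a vector index, while this sketch swaps in plain keyword overlap so it runs with zero dependencies. The `Chunk`, `retrieve`, and `build_prompt` names and the sample docs are hypothetical, and the actual model call is left out. What it shows is the order of operations: retrieve the best-matching text, then hand it (with its source) to the model alongside the question.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str  # where the text came from (hypothetical labels below)
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercased word set: a crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, chunks: list[Chunk], k: int = 1) -> list[Chunk]:
    """Return the k chunks sharing the most words with the question."""
    q_words = tokenize(question)
    ranked = sorted(chunks, key=lambda c: len(q_words & tokenize(c.text)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, chunks: list[Chunk], k: int = 1) -> str:
    """Retrieve first, then give the model the retrieved text plus its source."""
    context = "\n".join(f"[{c.source}] {c.text}" for c in retrieve(question, chunks, k))
    return (
        "Answer using ONLY the context below, and cite the source.\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    # Hypothetical company docs, echoing the PTO example above.
    docs = [
        Chunk("HR handbook, p. 4", "Full-time employees get 20 PTO days per year."),
        Chunk("Pricing sheet", "The Pro plan costs $49 per seat per month."),
        Chunk("Onboarding guide", "Laptops ship on your first day."),
    ]
    print(build_prompt("How many PTO days do we get?", docs))
```

The printed prompt carries the HR-handbook line and its source label, which is what lets a model answer “20 days, page 4” instead of guessing. Swap the keyword scorer for embeddings and you’re roughly at the shape most RAG pipelines follow.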

the point: For years, RAG lived in GitHub repos and machine-learning TED Talks. Today, it’s moving into the “normal feature” phase.

First, Microsoft shipped it in Copilot (not a single person hates it)

Now, Google baked it into Gemini

OpenAI and Anthropic already support retrieval workflows

And open-source stacks like LlamaIndex and LangChain do too

apply it: Anywhere your team currently says “where’s that doc,” you probably have access to a RAG-assisted helper at this point.

Build your AI engine. Win the next decade.

Tenex, the team behind this awesome newsletter, helps companies architect, staff, and ship real AI systems that move the P&L, not the hype cycle.

Get started →