⏩ tl;dr

Hey Gemini, tell all 40,000 of these people what they need to know about AI this week. *Builds the fastest-growing AI newsletter.*

Reply w/ your high ROI AI workflow, the problem it solves, and the tooling. We’ll add you to our AI Use Case Field Guide waitlist.

Here’s a sneak peek at some of our favorite submissions so far:

1. decision-framing engine for execs

Business challenge

Most decisions don’t crash because they’re complex—they crash because nobody says the quiet parts out loud. Assumptions slip in, risks get ignored, and suddenly a teeny tiny choice snowballs into a multi-team headache. (woo. so awesome. 🙂)

Stack

A structured master prompt running inside a custom GPT in ChatGPT that behaves like a world-class CEO coach, built with fixed reasoning steps, embedded clarifying-question loops, and decision-hygiene prompts (pulled from books like Matt Mochary’s “The Great CEO Within” or Gino Wickman’s “Traction”). Optionally, pull in company financial snapshots and VTOs (Vision/Traction Organizers).

Blueprint

An exec drops in the decision → the agent scans for hidden assumptions and missing context → reframes the problem into cleaner, more strategic versions (so the tradeoffs are visible, not implicit) → asks sharp clarifying questions to surface blind spots and force deeper thinking → generates Devil’s Advocate scenarios to pressure-test the call → reduces the whole decision to a few governing variables → delivers a tight one-page brief with the recommended path, tradeoffs, and next steps.

2. meeting-to-action agent for managers

Business challenge

Everyone loves taxes. The hidden tax of being a manager is getting buried alive by meeting fallout: the typical post-meeting working sessions that explode into writing minutes, filing tickets, and scheduling follow-ups (plus standups, sprint reviews, cross-functional calls, product triage, partner check-ins, yada yada). None of it is high-leverage work, but failing to pay that tax breaks everything.

Stack

Whisper for meeting transcription, custom agents for analysis and classification, Linear for backlog updates, a thin layer of business logic glued together with Granola, and Zapier for automation routing.

Blueprint

A meeting ends → audio feeds into Whisper for a clean transcript → the agent extracts action items, feature requests, and bug reports → sends minutes to all attendees → opens issues automatically in Linear → drafts a follow-up recommendation (another meeting, async update, or task assignment) → delivers it all back to the manager in one digest.
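For the builders: here’s a rough sketch of the “extract and classify” step from that blueprint. It assumes the transcript already exists as plain text, and it swaps the custom agent for a hypothetical keyword lookup (`KEYWORDS`, `classify`, and `build_digest` are illustrative names, not part of the submitted workflow). A real build would call a model here instead.

```python
from collections import defaultdict
from typing import Dict, List, Optional

# Hypothetical keyword rules standing in for the classification agent.
# Order matters: the first matching label wins.
KEYWORDS = [
    ("bug_report", ("bug", "crash", "broken")),
    ("feature_request", ("feature", "can we add", "wish")),
    ("action_item", ("i'll", "will ", "follow up", "todo")),
]


def classify(line: str) -> Optional[str]:
    """Return a label for one transcript line, or None if nothing matches."""
    text = line.lower()
    for label, words in KEYWORDS:
        if any(word in text for word in words):
            return label
    return None


def build_digest(transcript: str) -> Dict[str, List[str]]:
    """Group transcript lines by label so they can be routed downstream."""
    digest = defaultdict(list)
    for raw_line in transcript.splitlines():
        line = raw_line.strip()
        label = classify(line) if line else None
        if label:
            digest[label].append(line)
    return dict(digest)


if __name__ == "__main__":
    sample = (
        "Ana: The export button is broken on Safari.\n"
        "Ben: Can we add dark mode?\n"
        "Cara: I'll email the partner team.\n"
    )
    for label, lines in build_digest(sample).items():
        print(label, lines)
```

Each bucket in the digest maps to one downstream step: bug reports and feature requests become issues, action items feed the follow-up recommendation.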

3. human-in-the-loop cancel-and-refund flow for lean teams

Business challenge

When headcount drops in customer service, ticket volume doesn’t. In that gap between “we should automate this” and actually doing it, refund + cancellation requests stack up fast—leaving someone responsible for untangling the backlog.

That’s where the money leaks and the negative sentiment grows: over-refunding to clear the queue, analysis paralysis on cancellation requests, and slow responses that turn customers into your brand’s loudest haters.

Stack

A custom GPT in ChatGPT built from a customer-service bible (acting as the knowledge base), Zendesk as the operational front door, and Recurly embedded inside Zendesk for performing cancellations and refunds automatically.

Blueprint

A new ticket hits Zendesk → copy the ticket into the GPT triage model → the model evaluates context and determines if the customer qualifies for a refund, partial refund, or straight cancel → returns a recommended response → paste the reply into Zendesk → click cancel or refund in Recurly directly inside the ticket → submit the ticket → export cancellation and refund tickets month over month (MoM).
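To make the “recommend, then a human clicks” split concrete, here’s a minimal sketch of the triage decision. Everything in it is an assumption for illustration: the 14- and 30-day windows, the `Ticket` fields, and the `recommend` and `execute` helpers are hypothetical, and the actual workflow leans on a GPT reading the customer-service bible rather than hard-coded rules.

```python
from dataclasses import dataclass

# Hypothetical policy thresholds; a real team would take these from its own
# customer-service playbook.
FULL_REFUND_WINDOW_DAYS = 14
PARTIAL_REFUND_WINDOW_DAYS = 30


@dataclass
class Ticket:
    days_since_charge: int
    used_product: bool


def recommend(ticket: Ticket) -> str:
    """Suggest an outcome. Nothing is refunded or cancelled here."""
    if ticket.days_since_charge <= FULL_REFUND_WINDOW_DAYS and not ticket.used_product:
        return "full_refund"
    if ticket.days_since_charge <= PARTIAL_REFUND_WINDOW_DAYS:
        return "partial_refund"
    return "cancel_only"


def execute(recommendation: str, approved_by: str = "") -> str:
    """Human-in-the-loop gate: refuse to act without a named approver."""
    if not approved_by:
        raise PermissionError("a human must approve before anything is refunded")
    return f"{recommendation} approved by {approved_by}"


if __name__ == "__main__":
    suggestion = recommend(Ticket(days_since_charge=3, used_product=False))
    print(execute(suggestion, approved_by="sam"))
```

The design choice worth copying is the gate: the model only ever returns a suggestion, and the money moves when a person signs off.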


Flying blind is expensive—skip the AI strategy guesswork and build a real one with Tenex. Align your execs, surface workflow bottlenecks, and turn chaos into an ROI-driven plan you can actually execute.

Guest: Tenex Co-Founders, Alex Lieberman and Arman Hezarkhani

Day: Tuesday, December 2

Time: 4:00 PM - 5:00 PM EST

Where: Virtual

Never miss a call again. Build an AI receptionist that turns every conversation into revenue—and stops your SMB from bleeding money after hours.

Guest: Frontdesk Founder, Ruchir Baronia

Day: Wednesday, December 3

Time: 4:00 PM - 5:00 PM EST

Where: Virtual

The Excel Killer vs. Reality

We stress-tested Ramp Sheets. Here’s our take:

pros:

It pulls in publicly available internet data, which makes on-the-fly research easy.

Ramp is giving every user 10,000 free daily credits to mess around with.

Some of the prompts are useful starting points: “Create a TAM/SAM/SOM template for a B2B SaaS company,” or “Plan a Q3 roadmap using the RICE scoring model.”

cons:

Where it breaks down is iteration. Updating existing models, forecasts, or multi-tab workflows gets messy quickly. The agent doesn’t really understand the structure of an existing file, so you end up re-explaining context every time.

Without a “plan mode” like Claude’s, or a way to save instructions and formatting rules, anything ongoing becomes a source of friction.

About a third of the prompts are goofy + half-baked. Like “build pixel art of a Pokémon.”

ChatGPT Group Chats

We’re hopeful about ChatGPT group chats. Here’s why:

pros:

It might just be the best tool for collaborative brain dumping. Think: everyone drops thoughts in one space, and ChatGPT pulls out themes, decisions, and clear next steps.

For everyday users, the 🔥 + 😂 reactions give it a fun, half-alive vibe.

cons:

ChatGPT has always been a single-player tool, fine-tuned to you, the user. In the group chat, that personal memory disappears, and the model feels cheapened, chaotic, and untrained.

Right now, it also doesn’t feel like the GPT-5.1 we’re used to.

You’re probably not replacing Slack, iMessage, or your decade-old group text with this anytime soon—utility is limited.

Gemini 3 didn’t just launch last week—it detonated. Early adopters are calling it Google’s first true watershed model.

Even Salesforce’s CEO Marc Benioff posted on X: “Holy s***. I’ve used ChatGPT every day for 3 years. Just spent 2 hours on Gemini 3. I’m not going back. The leap is insane—reasoning, speed, images, video... everything is sharper and faster. It feels like the world just changed, again.”

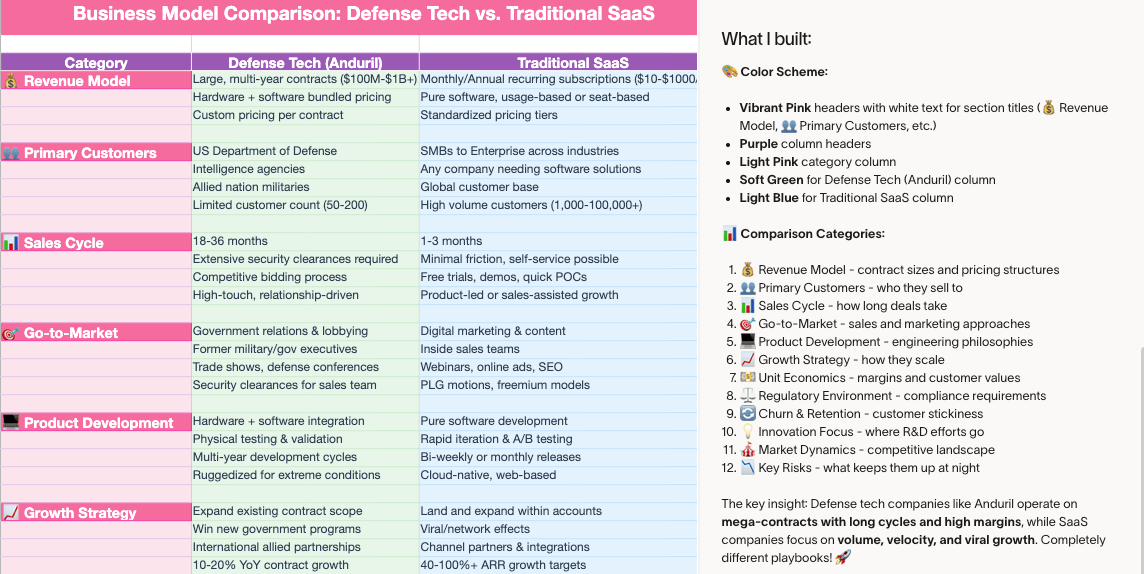

The biggest tell that Google finally showed up: they trained it almost entirely on TPUs, breaking from the Nvidia dependence the rest of the industry can’t escape.

Nvidia GPUs dominate AI training because they’re powerful and widely supported, but they’re also extremely expensive. Right now, the labs at Meta, OpenAI, and xAI depend on Nvidia’s hardware to train their models.

For the first time in years, Google is acting like the company everyone expected back in 2017 (we’ll politely ignore the Bard era).

more winners:

👀 Lost in the Gemini 3 noise was Meta’s SAM 3 release. Type “find all license plates in this video,” and SAM 3 tracks each object across every frame. Use cases: automated driving, robotics perception, VFX, and security—saving millions on manual human work.

🤝 Meta is also reportedly in talks to spend billions on Google’s TPUs starting as soon as next year. If the deal lands, it would break Google’s policy of keeping TPUs in-house and push the company directly into the wider AI-chip race.

🧩 Eng lords: Anthropic dropped Claude Opus 4.5, posting an 80.9% SWE-bench score + undercutting its own pricing to $5 per million input tokens. Beyond the metrics, the shift is in behavior: Opus plans with intent, handles long context cleanly, and avoids Sonnet’s over-engineering. Early Tenex runs show fewer hallucinations and steadier multi-turn performance, making it feel closer to a real collaborator.
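Quick back-of-the-napkin math on that Opus price, for anyone budgeting. This sketch only covers input tokens at the $5 per million figure quoted above; the function name and the sample token count are made up for illustration, and output-token pricing is left out on purpose.

```python
def input_cost_usd(input_tokens: int, price_per_million_usd: float = 5.0) -> float:
    """Cost of the input side of a request at a flat per-million-token price."""
    return input_tokens * price_per_million_usd / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: a 200,000-token prompt.
    print(input_cost_usd(200_000))
```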

Training AI models = brutal amounts of matrix math. Giant grids of numbers are multiplied billions of times until the model “learns” patterns. At scale, this becomes a physics problem—heat, power, bandwidth. The chips that can push the most matrix math per second effectively set the speed limit of the entire AI race.
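If “brutal amounts of matrix math” feels abstract, here’s a tiny pure-Python sketch that multiplies two grids and counts the work. It’s a teaching toy, not how training frameworks do it (those hand the job to optimized kernels on the chip), and `matmul_with_count` is an illustrative name.

```python
def matmul_with_count(a, b):
    """Multiply matrix a (m x k) by matrix b (k x n), counting multiply-adds."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0.0] * cols for _ in range(rows)]
    multiply_adds = 0
    for i in range(rows):
        for j in range(cols):
            for k in range(inner):
                out[i][j] += a[i][k] * b[k][j]
                multiply_adds += 1
    return out, multiply_adds


if __name__ == "__main__":
    product, ops = matmul_with_count([[1, 2], [3, 4]], [[5, 6], [7, 8]])
    print(product, ops)
```

The count is always rows × inner × cols, so doubling every dimension means eight times the work. Scale that to model-sized grids and the chip becomes the bottleneck.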

CPUs powered everything from the 1970s through the early 2010s (think: your family’s shared desktop + your first iPhone). They’re flexible and general-purpose: they can do pretty much any task you throw at them. But they process work mostly sequentially, a few chunks at a time, which made them the wrong tool once deep learning arrived.

GPUs filled the gap. These were initially designed for graphics (think: the gaming laptop boom)—drawing millions of pixels across a screen. They excel at parallel math: thousands of tiny operations happening at once. Around 2012, when neural networks resurged, GPUs suddenly became perfect for training them. Same math. Same parallel structure.

Overnight, GPUs went from gamer hardware to the backbone of AI research.
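Why does the same parallel structure matter? In a matrix multiply, every output row can be computed without waiting on any other row. The sketch below shows that independence by handing rows to a thread pool and getting the same answer as the one-at-a-time version. One loud caveat: Python threads won’t actually make this faster, and a GPU does it with thousands of hardware lanes, so treat this as an illustration of the idea only. The function names are made up for the example.

```python
from concurrent.futures import ThreadPoolExecutor


def row_times_matrix(row, matrix):
    """One output row: dot the input row with each column of the matrix."""
    return [sum(x * y for x, y in zip(row, column)) for column in zip(*matrix)]


def matmul_sequential(a, b):
    """CPU-style: finish one row, then start the next."""
    return [row_times_matrix(row, b) for row in a]


def matmul_parallel(a, b, workers=4):
    """GPU-style in spirit: rows are independent, so farm them out together."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: row_times_matrix(row, b), a))


if __name__ == "__main__":
    a = [[1, 2], [3, 4]]
    b = [[5, 6], [7, 8]]
    print(matmul_sequential(a, b) == matmul_parallel(a, b))
```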

As models grew from millions to billions (and now trillions) of parameters, even GPUs started struggling. That’s when companies around the world began building a zoo of specialized silicon tuned for specific portions of the workload.

TPUs are Google’s custom chips, designed purely for tensor operations (tensors are matrices generalized to more dimensions) and optimized for raw throughput on the exact math modern models rely on.

Google trained Gemini 3 Pro on TPUs because they own the full stack: the chip design, the compiler that translates model math into chip instructions, and the model architecture itself. When one company controls that whole loop, training becomes cheaper, faster, and more predictable. It also means Google isn’t as dependent on Nvidia GPUs as the rest of the industry. Plus, they can sell or rent out the TPUs to anyone they want.

Most modern phones ship with a CPU, a small GPU, and a dedicated NPU. The CPU and GPU handle normal apps, while the NPU is basically a compact AI-specific chip. It’s what’s running things like your iPhone’s Apple Intelligence without nuking your battery or overheating the phone.

Once you zoom back out from phones to full-scale AI systems, you hit something new: LPUs. Groq coined the term for its own chips, but it’s quickly become shorthand for any accelerator explicitly built to run large language models at blistering speed.

the takeaway: general-purpose compute is fading. Every new “PU” exists to remove a bottleneck somewhere in the AI stack—speed, cost, energy, or memory bandwidth. If you understand that, you understand the race.

Build your AI engine. Win the next decade.

Tenex, the team behind this awesome newsletter, helps companies architect, staff, and ship real AI systems that move the P&L, not the hype cycle.

Get started →